Parallax Advanced Research Independent Research and Development (IR&D)

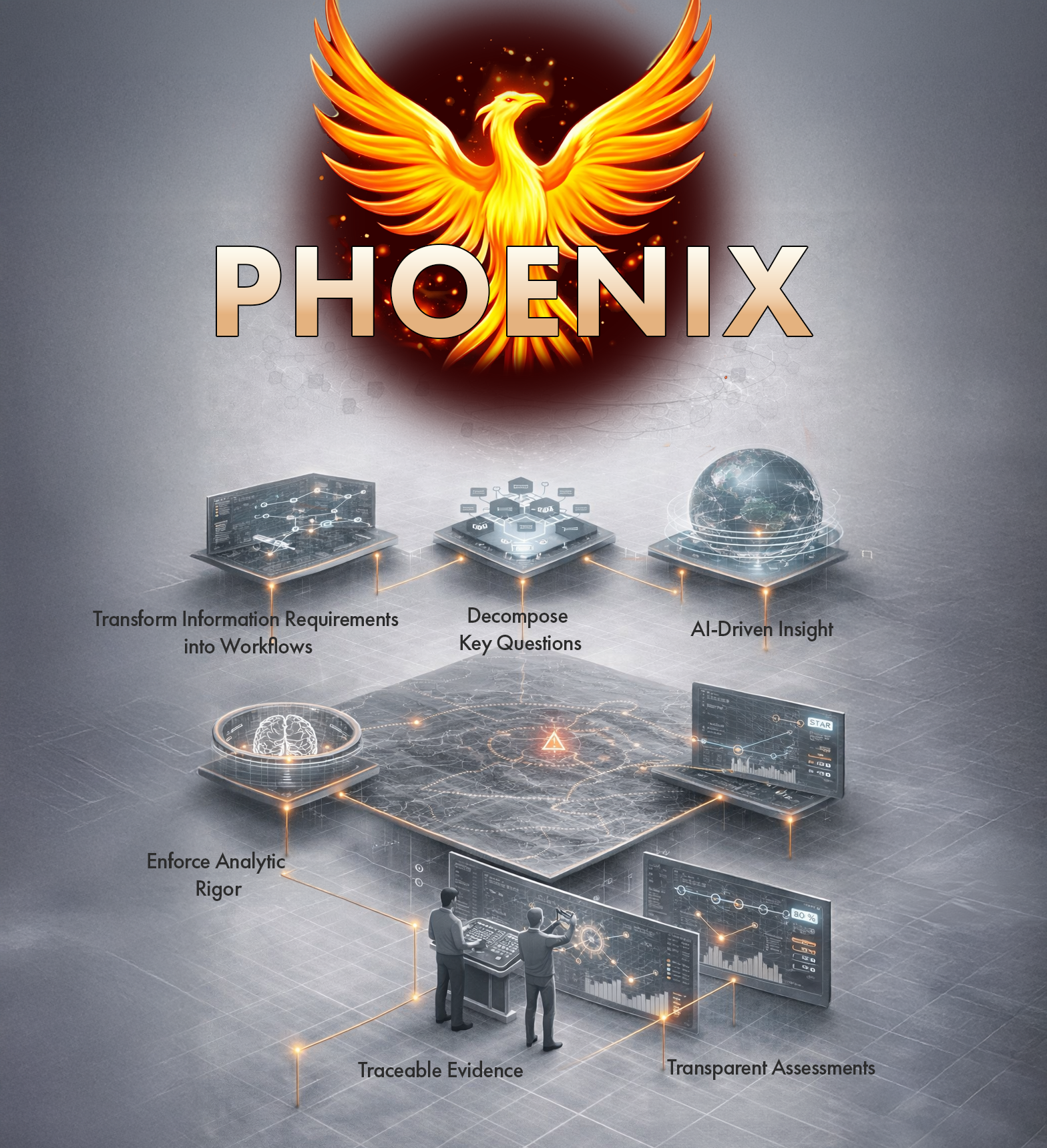

PHOENIX is a Parallax IR&D capability: an AI-enabled analytic platform that embeds Large Language Models (LLMs) into applied decision analytics workflows to improve how organizations define, structure, and answer complex operational questions. It converts priority intelligence and information needs into auditable, evidence-based workflows by decomposing leadership intent into essential data points, indicators, and supporting evidence, creating clear traceability from data to decision.

Who It Serves

PHOENIX supports analytical professionals and requirements managers responsible for developing, decomposing, and managing key information needs at the strategic (long-term planning), operational (cross-functional initiatives), and tactical (daily operations) levels. It also serves organizational leadership, planners, and executives who require timely, defensible assessments and the ability to inspect how conclusions were derived.

Why It’s IR&D and Why It Matters

PHOENIX addresses a critical operational gap: many current tools store requirements and analytical products in disparate, disconnected silos, whereas PHOENIX is the only platform that actively enforces a disciplined decomposition from executive intent down to collectible, measurable data. The fragmentation of legacy systems exposes analysis to cognitive bias, incomplete metric identification, limited traceability, and critical knowledge gaps. As IR&D, PHOENIX matures a digitally enforced reasoning process that brings unprecedented analytic rigor, transparency, and repeatability to high-consequence decisions in complex environments.

How It’s Being Researched and Delivered

PHOENIX functions as a software-based analytic augmentation layer for strategic and operational environments, structuring work through the hierarchy: Primary Requirement → Essential Information → Indicator, linking research products directly to the information and indicators they satisfy. The platform incorporates Large Language Model (LLM) support in a Human-on-the-Loop configuration to improve requirement decomposition, identify information gaps, and provide active red-teaming feedback for bias, ambiguity, and logical breaks. It also supports confidence modeling and a feedback loop between analytical producers and requirement originators.
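The Primary Requirement → Essential Information → Indicator hierarchy can be pictured as a tree in which research products attach to the indicators they satisfy. The Python sketch below is illustrative only: the class names mirror the hierarchy's labels, but the fields, the `trace` and `gaps` helpers, and the sample requirement are hypothetical and do not describe PHOENIX's actual data model. It shows how such a structure could support traceability (which requirement path a product serves) and gap identification (indicators with no supporting product).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Indicator:
    """Lowest level: an observable, collectible data point (hypothetical fields)."""
    name: str
    products: list[str] = field(default_factory=list)  # IDs of linked research products


@dataclass
class EssentialInformation:
    """Middle level: information needed to answer the requirement."""
    name: str
    indicators: list[Indicator] = field(default_factory=list)


@dataclass
class PrimaryRequirement:
    """Top level: the question expressing leadership intent."""
    question: str
    essential_info: list[EssentialInformation] = field(default_factory=list)


def trace(req: PrimaryRequirement, product_id: str) -> list[tuple[str, str, str]]:
    """Return every (requirement, essential information, indicator) path a product supports."""
    return [
        (req.question, ei.name, ind.name)
        for ei in req.essential_info
        for ind in ei.indicators
        if product_id in ind.products
    ]


def gaps(req: PrimaryRequirement) -> list[tuple[str, str]]:
    """Return (essential information, indicator) pairs that have no linked product yet."""
    return [
        (ei.name, ind.name)
        for ei in req.essential_info
        for ind in ei.indicators
        if not ind.products
    ]


# Hypothetical example requirement, for illustration only.
req = PrimaryRequirement(
    question="Will Supplier A meet its Q3 delivery commitments?",
    essential_info=[
        EssentialInformation("Production capacity", [
            Indicator("Plant utilization rate", products=["RPT-001"]),
            Indicator("Staffing levels"),
        ]),
    ],
)

print(trace(req, "RPT-001"))
# [('Will Supplier A meet its Q3 delivery commitments?', 'Production capacity', 'Plant utilization rate')]
print(gaps(req))
# [('Production capacity', 'Staffing levels')]
```

Keeping products linked only at the indicator level means every product's contribution can be walked back up to the original question, which is the kind of data-to-decision traceability the hierarchy is meant to provide.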

Our Impact

- Strengthens analytic rigor by enforcing structured decomposition from executive intent to measurable, collectible information—making reasoning transparent, inspectable, repeatable, and consistent across teams and time

- Reduces operational risk by mitigating cognitive bias, improving indicator completeness, and increasing analytic consistency across personnel and organizational boundaries

- Improves decision advantage by linking evidence directly to assessments, enabling quantifiable confidence, surfacing intelligence gaps and flawed reasoning earlier, and ensuring defensible, evidence-based decisions

Core Capabilities

- Analytic structuring and requirements management: Transforms high-level requirements into structured, inspectable workflows with clear, evidence-based traceability from data to decision

- SAT integration: Operationalizes Structured Analytic Techniques (SATs) to enforce disciplined decomposition prior to assessment

- AI-assisted requirement decomposition: Uses LLMs to improve question quality, identify missing information elements, and ensure comprehensive indicator coverage

- Active analytic red teaming: Provides real-time detection of bias, ambiguity, and logical gaps in requirement formulation

- Confidence modeling and traceability: Aggregates evidence across indicators to support higher-level assessments, with direct linkage between products, information elements, and indicators

- Feedback-driven refinement: Closes the loop between requirement originators and analysts by validating whether outputs satisfy the original question and diagnosing gaps when they fall short
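The source does not specify how PHOENIX aggregates evidence into confidence. As a hedged illustration of confidence modeling and feedback-driven gap diagnosis, the sketch below assumes each indicator carries an analyst-assigned confidence in [0, 1] and a relative weight, rolls them up with a weighted mean, and flags indicators below a threshold as candidate shortfalls to send back to the requirement originator. The weighted mean, the default `0.5` threshold, and all names here are hypothetical stand-ins, not the platform's method.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class IndicatorAssessment:
    name: str
    confidence: float   # assumed: analyst-assigned confidence in [0, 1]
    weight: float = 1.0  # assumed: relative importance of this indicator


def aggregate_confidence(assessments: list[IndicatorAssessment]) -> float:
    """Weighted mean of indicator confidences (an assumed, illustrative rollup rule)."""
    if not assessments:
        raise ValueError("no indicator assessments to aggregate")
    for a in assessments:
        if not 0.0 <= a.confidence <= 1.0:
            raise ValueError(f"confidence out of range for {a.name!r}")
    total_weight = sum(a.weight for a in assessments)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(a.confidence * a.weight for a in assessments) / total_weight


def shortfalls(assessments: list[IndicatorAssessment], threshold: float = 0.5) -> list[str]:
    """Names of indicators below the (hypothetical) threshold: candidate gaps for feedback."""
    return [a.name for a in assessments if a.confidence < threshold]


# Hypothetical evidence for one essential-information element.
evidence = [
    IndicatorAssessment("Plant utilization rate", 0.8, weight=2.0),
    IndicatorAssessment("Staffing levels", 0.4),
]

print(round(aggregate_confidence(evidence), 3))  # 0.667
print(shortfalls(evidence))  # ['Staffing levels']
```

A weighted mean is used here only because every step is easy to inspect; a production confidence model could reasonably use a different rollup rule.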